Introduction

This post is about how we solved the interesting problem of randomly generating more than 12 million unique codes of 8 non-ambiguous characters. The problem is interesting because the most obvious solutions would take days to generate the codes. Our solution was to write a very efficient program in C and run it on a cluster of 11 computers, each with a dual-core processor and 2 GB of RAM. Even so, the application took 18 hours to run but, in the end, we got our 12 million unique codes.

This post was written by Avinash Meetoo, Managing-Director, and Noorani Bakerally, Research and Development Specialist of Knowledge7.

The problem

We had been asked by a very well-known Mauritian company to generate 12,050,000 unique codes. The codes had to be unique (in the sense that no code may appear more than once in the list) and each code had to be 8 non-ambiguous characters long (no 0 or o for example).

This is an interesting problem from a technical point of view as the possible solutions all need a lot of processing power. It is also an interesting problem from a business point of view because, well, many draws and games require a lot of unique codes with only a few considered as being winning codes. Of course, it is essential that the codes be non-ambiguous (so that people can read them, write them and/or transmit them without mistakes). It is also essential that the codes be random and be selected from a very large set of possible codes (in order to decrease frauds).

Possible slow solutions

The most obvious solution is to generate a random code and, before adding it to a list, check that the code does not already exist in the list. The complexity of this algorithm is clearly O(N²) and, even on a quick computer, it would have taken years to run.
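To make the quadratic cost concrete, here is a minimal sketch of the generate-and-check approach. It works on integers rather than 8-character codes, and `random_below` and `generate_naive` are hypothetical names introduced for illustration. `rand()` is used only to keep the sketch short and would not be good enough for real draw codes.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical helper: pseudo-random value in [0, range).
   Combines four rand() calls to get about 60 bits; the final
   modulo introduces a slight bias that a real generator should avoid. */
uint64_t random_below(uint64_t range)
{
    uint64_t r = 0;
    for (int i = 0; i < 4; i++)
        r = (r << 15) ^ (uint64_t)(rand() & 0x7FFF);
    return r % range;
}

/* Fills out[0..n-1] with n distinct values in [0, range).
   Every candidate is compared against all values kept so far,
   which is where the O(N^2) behaviour comes from. */
void generate_naive(uint64_t *out, size_t n, uint64_t range)
{
    assert(range >= n);              /* otherwise the loop cannot finish */
    size_t count = 0;
    while (count < n) {
        uint64_t candidate = random_below(range);
        int seen = 0;
        for (size_t i = 0; i < count; i++) {
            if (out[i] == candidate) {
                seen = 1;
                break;
            }
        }
        if (!seen)
            out[count++] = candidate;
    }
}
```

With N = 12,050,000 the inner loop performs on the order of N²/2, roughly 7 × 10^13, comparisons in total.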

A variation is to use an algorithm which generates a random code and systematically adds it to a list. After some time, the list will contain quite a lot of codes, possibly with repetitions. The idea is to generate more than 12,050,000 codes (to account for the repetitions) and then eliminate the duplicates so as to keep only the 12,050,000 unique codes needed. This algorithm is better as it runs in O(N) but, still, it would have taken more than one week to run according to our calculations.
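The oversample-then-deduplicate idea can be sketched as follows. This is an illustration under assumptions, not the program described in this post: `random_below`, `compare_u64` and `generate_and_dedupe` are hypothetical names, and duplicates are removed by sorting, which makes this particular sketch O(N log N). A hash-based pass would be closer to the O(N) mentioned above.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical helper: pseudo-random value in [0, range), built from rand(). */
uint64_t random_below(uint64_t range)
{
    uint64_t r = 0;
    for (int i = 0; i < 4; i++)
        r = (r << 15) ^ (uint64_t)(rand() & 0x7FFF);
    return r % range;
}

/* Comparison callback for qsort on uint64_t values. */
int compare_u64(const void *a, const void *b)
{
    uint64_t x = *(const uint64_t *)a;
    uint64_t y = *(const uint64_t *)b;
    return (x > y) - (x < y);
}

/* Draws `total` values (repeats allowed) into buf, sorts them and
   removes duplicates in place. Returns how many distinct values remain. */
size_t generate_and_dedupe(uint64_t *buf, size_t total, uint64_t range)
{
    assert(range > 0);
    for (size_t i = 0; i < total; i++)
        buf[i] = random_below(range);

    qsort(buf, total, sizeof buf[0], compare_u64);

    size_t kept = 0;
    for (size_t i = 0; i < total; i++) {
        if (kept == 0 || buf[i] != buf[kept - 1])
            buf[kept++] = buf[i];
    }
    return kept;
}
```

The caller would request somewhat more than 12,050,000 values and keep the first 12,050,000 survivors. Note that sorting leaves the values in increasing order, so they would need to be shuffled before being handed out.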

Our quicker solution

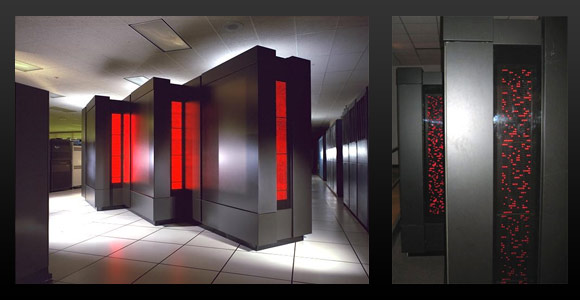

We decided to implement a concurrent program using the MPI (Message Passing Interface) library and written in C (for maximum speed). The concurrent program was made to run in parallel on a cluster of 11 Linux computers:

- The first node (rank = 0) is a master node, responsible for saving the list of all generated codes to disk.

- Each remaining node (rank = 1 to 10) generates unique codes using an algorithm devised by Jon Bentley in the classic book, Programming Pearls. The ten nodes were programmed to start generating codes from different initial points so as not to bias the results.
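The post does not say which algorithm from Programming Pearls was used. As an illustration only, the sketch below shows selection sampling, one of the approaches to generating unique random integers covered in that book, applied to a sub-range so that each worker could be given its own starting point. The MPI communication is left out, and `random_below`, `sample_range`, `start` and `span` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical helper: pseudo-random value in [0, range), built from rand(). */
uint64_t random_below(uint64_t range)
{
    uint64_t r = 0;
    for (int i = 0; i < 4; i++)
        r = (r << 15) ^ (uint64_t)(rand() & 0x7FFF);
    return r % range;
}

/* Selects exactly m distinct integers from [start, start + span),
   in increasing order, looking at each candidate at most once.
   No duplicate check is needed because no candidate is visited twice. */
size_t sample_range(uint64_t *out, size_t m, uint64_t start, uint64_t span)
{
    assert(m <= span);
    size_t chosen = 0;
    for (uint64_t i = 0; i < span && chosen < m; i++) {
        uint64_t remaining = span - i;   /* candidates not yet examined */
        size_t needed = m - chosen;      /* values still to be selected */
        /* Keep candidate i with probability needed / remaining. */
        if (random_below(remaining) < needed)
            out[chosen++] = start + i;
    }
    return chosen;
}
```

Under this assumption, ten workers could each call `sample_range` with a different `start` and a tenth of the total count, and send their results to the master node. Since the sub-ranges do not overlap, the combined list contains no repetitions.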

Strictly speaking (and this had an important impact on the speed of the algorithm), we decided to make the nodes generate long integers. The encoding into characters was done a posteriori using a set of 32 non-ambiguous characters. The 12,050,000 codes were then statistically analysed and we found that they were entirely random and without any repetitions.
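Converting an integer to an 8-character code over a 32-character set amounts to writing the integer in base 32, 5 bits per character. The sketch below assumes a particular alphabet (digits 2 to 9 and uppercase letters without I and O); the actual set used for the project is not given in this post, and `ALPHABET` and `encode_code` are hypothetical names.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed alphabet of 32 non-ambiguous characters:
   digits 2-9 plus A-Z without I and O. */
static const char ALPHABET[] = "23456789ABCDEFGHJKLMNPQRSTUVWXYZ";

/* Writes the 8-character code for `value` into out, followed by '\0'.
   value must be below 32^8 = 2^40. */
void encode_code(uint64_t value, char out[9])
{
    assert(value < (UINT64_C(1) << 40));
    for (int i = 7; i >= 0; i--) {
        out[i] = ALPHABET[value & 31];   /* lowest 5 bits select a character */
        value >>= 5;
    }
    out[8] = '\0';
}
```

Because the mapping is one-to-one, distinct integers always give distinct codes, so uniqueness only has to be guaranteed on the integers. With 32 characters there are 32^8 = 1,099,511,627,776 possible codes, so 12,050,000 codes cover roughly one in 91,000 of them.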

Thanks to the Linux cluster, we managed to bring the run time down to 18 hours instead of the seven days or more that the previous version required. This allowed us to run the parallel program more than once at Knowledge7 and, each time, we got 12,050,000 entirely random codes, which validated our algorithms and architecture.

Followups

As we are very satisfied with the performance of the program, we intend to use it again if ever we get similar demands. We also were very happy with the fact that the Linux cluster worked perfectly from day 1. It is even possible that we run a Linux Cluster Installation and Programming training in the future.

Would you be interested?

Did the computation take advantage of both cores on each machine?

We thought about that but, in the end, decided to use only one core on each of the 10 compute nodes. This was done because (1) we didn’t want to overheat the machines too much and (2) using both cores would have complicated our MPI program.